Did Google Actually Make Us Dumber?

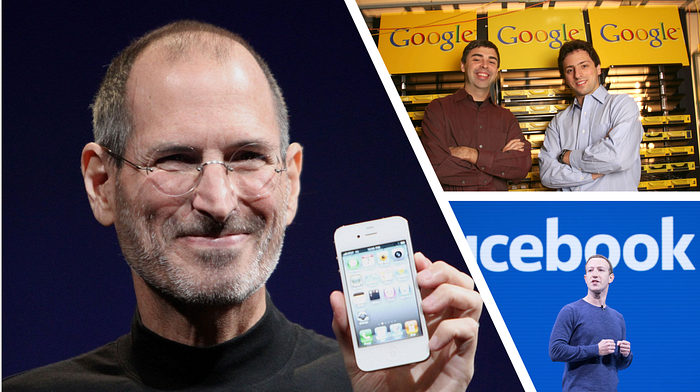

Between 1996 and 2010, three of the most transformative god-like technologies of modern history were invented.

- The Search Engine

- The Smart Phone

- Social Media

These inventions changed the way we live forever. The search engine gave us access to a seemingly infinite amount of information about anything in seconds. The smart phone gave us countless tools that moved much of the physical world into the digital one. Social media let us share and stay connected with billions of other people.

During the same time period, we welcomed a new generation — Generation Z. My generation doesn’t remember a time before Google and social media and barely a time before the smart phone.

We are the first digital natives, the first real guinea pigs of this always-connected world.

And like anything else transformative, these inventions changed our society for the better, but also for the worse.

Today I want to talk about a new 21st-century term: “digital dementia.”

…………………………………………………………………………………..

Do you ever scroll on your phone while you’re watching TV without even realizing it?

Or think you hear your phone buzz but you look and there’s no notification?

Or feel like your short-term memory or ability to recall things has gotten worse in recent years?

For many people, this can't be attributed just to coincidence or even ADHD. Over the past decade, millions of Americans have started to suffer from “digital dementia.”

Digital dementia is a term coined by German neuroscientist Manfred Spitzer to describe a decline in cognitive abilities, caused by overuse of digital technology, of the kind more commonly linked with brain injuries. While research in this field is still nascent, some neuroscience studies have suggested that access to these god-like technologies may be leading to premature cognitive decline, even among youth.

One 2016 study by the NIH “concluded that having available data online may remove the need to commit information to memory.” As a result of having Google readily available with all our answers, we’ve reprogrammed our brains not to retain information, but to retain where to find it. If we don’t know something, we Google it.

In some ways, this is just learning to be resourceful. But in other ways, it’s a decline in cognitive stimulation. And the less we use a skill, the less sharp it gets. Even our brains can get rusty. David Copeland, an associate professor of psychology and director of the Reasoning and Memory Lab at the University of Nevada, Las Vegas, has even suggested that “because we are using these devices instead of memorizing, our memorization skills might be diminishing.”

And so Google might not be making us dumber, but it’s certainly making our minds slower and intellectually lazy.

A similar trend in cognitive decline is linked with the rise of media multitasking, the act of engaging with multiple media devices at once. Some examples include scrolling through social media while watching TV and instant messaging while playing video games. Broader examples include surfing the internet during class or watching YouTube while eating at the dinner table.

The average child between ages 8 and 18 now spends about 10 hours on screens per day, 29% of that time media multitasking. And some recent studies have shown a direct link between media multitasking and symptoms like inattention and hyperactivity-impulsivity.

Humans were not programmed to multitask all the time, so when we spend roughly 3 hours a day doing it (29% of those 10 hours), it makes sense that we’ve seen a decline in concentration ability across the board.

According to experts on cognitive function, “The occipital lobe in the back of the brain processes visual signals such as visual cues from a video game, social media or TV program. While seated and engaged with technology, the front part of the brain including the frontal and parietal lobes, are under-stimulated. These regions of the brain are responsible for higher order thinking and good behaviors such as motivation, goal setting, reading, writing, memory and socially appropriate behaviors.”

For children, this lack of cognitive stimulation in their crucial developmental years may increase the chance of early-onset dementia, which has symptoms that include developmental delays, lack of movement, poor posture, and the inability to recall memories.

The National Center for Biotechnology Information “predicts that from 2060 to 2100, the rates of Alzheimer’s disease and related dementias (ADRD) will increase significantly, far above the Centres for Disease Control (CDC) projected estimates of a two-fold increase, to upwards of a four-to-six-fold increase.”

There’s no doubt that these three god-like inventions have changed the world for the better. But there’s also no doubt that they have directly affected our cognitive abilities and long-term development for the worse.

Is there a world where both sides coexist? Absolutely.

It starts with consuming educational content to match our consumption of quick-dopamine entertainment content, taking technology detoxes and setting boundaries on where and when technology can be used, and practicing mindfulness and brain stimulation exercises. These habits would help us, on an individual level, live a more balanced life in a technological world.

But for now, the power of these technologies has overrun our society faster than we can adjust.

Renowned American biologist Edward O. Wilson summed up the dilemma we face best:

“The real problem of humanity is the following: We have Paleolithic emotions, medieval institutions and godlike technology. And it is terrifically dangerous.”

*Thanks for reading and following the Tech as a Tool Project. This content is part of the 6-week LinkedIn Accelerator incubator program where I tackle our society’s complex relationship with technology.*

Originally published at https://www.linkedin.com.